Environment and Consumption

If the life-supporting ecosystems of the planet are to survive for future generations, the consumer society will have to dramatically curtail its use of resources, partly by shifting to high-quality, low-input durable goods and partly by seeking fulfillment through leisure, human relationships, and other nonmaterial avenues.

Alan Durning, How Much Is Enough?

A man is rich in proportion to the things he can afford to let alone.

Henry David Thoreau, Walden

The first sweetened cup of hot tea to be drunk by an English worker was a significant historical event, because it prefigured the transformation of an entire society, a total remaking of its economic and social basis. We must struggle to understand fully the consequences of that and kindred events, for upon them was erected an entirely different conception of the relationship between producers and consumers, of the meaning of work, of the definition of self, of the nature of things.

Sidney Mintz, Sweetness and Power

All animals alter their environments as a condition of their existence. Human beings, in addition, alter their environments as a condition of their cultures, that is by the way they choose to obtain food, produce tools and products, and construct and arrange shelters. But culture, an essential part of human adaptation, can also threaten human existence when short-term goals lead to long-term consequences that are harmful to human life. Swidden agriculture alters the environment but not as much as irrigation agriculture and certainly not as much as modern agriculture with its use of chemical fertilizers, pesticides, and herbicides. Domesticated animals alter environments, but keeping a few cattle for farm work or cows for dairy products does far less damage than maintaining herds of thousands to supply a meat-centered diet.

The degree to which environments are altered and damaged is determined in part by population and in part by the technology in use. Obviously, the more people in a given area, the more potential there is for environmental disruption. Tractors and bulldozers alter the environment more than hoes or plows. But the greatest factor in environmental alteration (in the use of raw materials, the use of nonhuman energy, and the production of waste) is consumption. Because of our level of consumption, the average American child will do twice the environmental damage of a Swedish child, three times that of an Italian child, thirteen times that of a Brazilian child, thirty-five times that of an Indian child, and 280 times that of a Chadian or Haitian child (Kennedy 1993:32).

The

Mathis Wackernagel and William Rees (1996) estimate that four to six hectares of land are required to maintain the consumption level of the average person from a high-consumption country. The problem is that in 1990, worldwide there were only 1.7 hectares of ecologically productive land for each person. They conclude that the deficit is made up in core countries by drawing down the natural resources of their own countries and expropriating the resources, through trade, of peripheral countries. In other words, someone has to pay for our consumption levels, and it will either be our children or inhabitants of the periphery of the world system.
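The size of this deficit can be worked out directly. The short Python sketch below uses only the figures quoted above (four to six hectares required per person, 1.7 hectares available per person in 1990); the function name `footprint_deficit` is a hypothetical label chosen for illustration and does not come from Wackernagel and Rees.

```python
def footprint_deficit(required_ha, available_ha=1.7):
    """Return (deficit in hectares, ratio of required to available land).

    available_ha defaults to the 1990 worldwide figure quoted in the text.
    """
    deficit = required_ha - available_ha
    ratio = required_ha / available_ha
    return deficit, ratio


# Lower and upper estimates for a person in a high-consumption country.
for required in (4.0, 6.0):
    deficit, ratio = footprint_deficit(required)
    print(f"{required:.1f} ha required: deficit of {deficit:.1f} ha, "
          f"{ratio:.1f} times the available land")
```

On these figures, the average person in a high-consumption country draws on roughly 2.4 to 3.5 times the per-person share of ecologically productive land, a shortfall of about 2.3 to 4.3 hectares each.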

Our consumption of goods obviously is a function of our culture. Only by producing and selling things and services does capitalism in its present form work, and the more that is produced and the more that is purchased the more we have progress and prosperity. The single most important measure of economic growth is, after all, the gross national product (GNP), the sum total of goods and services produced by a given society in a given year. It is a measure of the success of a consumer society, obviously, to consume.

The production, processing, and consumption of commodities, however, require the extraction and use of natural resources (wood, ore, fossil fuels, and water) and require the creation of factories and factory complexes, which create toxic byproducts, while the use of commodities themselves (e.g., automobiles) creates pollutants and waste. Yet of the three factors to which environmentalists often point as responsible for environmental pollution (population, technology, and consumption), consumption seems to get the least attention. One reason, no doubt, is that it may be the most difficult to change; our consumption patterns are so much a part of our lives that to change them would require a massive cultural overhaul, not to mention severe economic dislocation. A drop in demand for products, as economists note, brings on economic recession or even depression, along with massive unemployment.

The maintenance of perpetual growth and the cycle of production and consumption essential in the culture of capitalism do not bode well for the environment. At the beginning of Chapter 1 we mentioned that the consumer revolution of the late nineteenth and early twentieth centuries was caused in large part by a crisis in production; new technologies had resulted in production of more goods, but there were not enough people or money to buy them. Since production is such an essential part of the culture of capitalism, society quickly adapted to this crisis by convincing people to buy things, by altering basic institutions, and even by generating a new ideology of pleasure. The economic crisis of the late nineteenth century was solved but at considerable expense to the environment in the additional waste that was created and resources that were consumed. At that time the world's population was about 1.6 billion, and those caught up in the consumer frenzy were a fraction of that total.

The global economy today faces the same problem it faced one hundred years ago, except that the world population has almost quadrupled. Consequently it is even more important to understand how the interaction between capital, labor, and consumption in the culture of capitalism creates an overproduction of commodities and how this relates to environmental pollution. To illustrate, let's take a quick look at the present state of the global automobile industry.

In capitalism competition between companies for world markets requires that they constantly develop new and improved ways to produce things and lower costs. In some industries, such as textiles, as we saw in Chapter 2, competition requires seeking cheaper sources of labor; in others, such as the automobile industry, it means creating new technologies that replace people with machines to lower labor costs. Twenty years ago the production of one automobile took hundreds of hours of human labor. Today a Lexus LS 400 requires only 18.4 hours of human labor, Ford Motor Company produces several cars with 20.0 hours of human labor, and General Motors lags behind at about 24.8 hours per car (Greider 1997:110-112).

In addition to reducing the number of jobs available to people, advanced productive technology creates the potential for producing ever more cars, regardless of whether there are people who want to buy them. In 1995 the automobile industry produced over 50 million automobiles, but there was a market for only 40 million. What can companies do? Obviously they can begin to close plants or cut back on production, which some do. In the 1980s some 180,000 American auto workers lost their jobs because of cutbacks and factory shutdowns. But the producers, of course, hope the problem of selling this surplus is someone else's problem, so they continue to produce cars.

From the perspective of the automobile companies and their workers, the preferred solution to overproduction is to create a greater demand for automobiles. This is difficult in core countries, where the market is already saturated with cars. In the

But that is exactly the goal of automotive manufacturers and the nation-states that operate to help them build and sell their products. Not only would automobile makers in the core like to enter the Chinese market, the Chinese themselves plan to build an automobile industry as large as that of the United States, to produce cars for their own market and to compete in other markets as well. If China (or India, Indonesia, Brazil, or most of the rest of the periphery) even approached the consumption rate of automobiles common in the core, the increased environmental pollution would be staggering. There would be not only massive increases in hydrocarbon pollution but also vastly increased demands for raw materials, especially oil. And the overproduction dilemma is not unique to automobiles: the steel, aircraft, chemical, computer, consumer electronics, drug, and tire industries, among others, face the same dilemma.

The environmental problem could be alleviated if consumers simply said 'enough is enough' and stopped consuming as much as they do. Indeed, there have been a number of social movements to convince people to consume less. But, as previously noted, any reduction of consumption would likely cause severe economic disruption. Furthermore, few are aware of how large a reduction would have to be to effect a change. A study by Friends of the Earth Netherlands asked what the consumption levels of the average Dutch person would have to be in the year 2010 if consumption levels over the world were equal and if resource consumption was sustainable. They found that consumption levels would have to be reduced dramatically. For example, to reduce global warming by the year 2010, people in the Netherlands would have to reduce carbon dioxide emissions by two-thirds; to accomplish that a Dutch person would have to limit the use of carbon-based fuel to one liter per day, thus limiting travel to 15.5 miles per day by car, 31 miles per day by bus, 40 miles per day by train, or 6.2 miles per day by plane. A trip from

Thus significantly reducing our consumption patterns is no easy task. Consumption is as much a part of our culture as horse raiding and buffalo hunting were part of Plains Indian culture; it is a central element. Consequently there is no way to appreciate the problem of environmental destruction without understanding how people are turned into consumers, how luxuries are turned into necessities. That is, why do people choose to consume what they do, how they do, and when they do?

Take sugar, for example. In 1997, each American consumed in his or her soft drinks, tea, coffee, cocoa, pastries, breads, and other foods sixty-six pounds of sugar (USDA 2000). In addition, Americans consume almost two hundred grams, or fifty-three teaspoonfuls, of caloric sweeteners each day (Gardner and Halweil 2000:31). Why? Liking the taste might be one answer. In fact, a predilection for sweets may be part of our biological makeup. But that doesn't explain why we consume it in the form of sugarcane and beet sugar and in the quantities we do. Then there is meat. Modern livestock production is one of the most environmentally damaging and wasteful forms of food production the world has known. Yet Americans eat more meat per capita than all but a few other peoples. Some environmentalists argue that we can change our destructive consumption patterns, if we desire. But is our pattern of consumption only a matter of taste and of choice, or is it so deeply embedded in our culture as to be virtually impervious to change?

To begin to answer this question, we shall examine the history of sugar and beef, commodities that figure largely in our lives but involve environmental degradation. The combination of sugar and beef is appropriate for a number of reasons:

1. The production and processing of both degrade the environment; furthermore, the history of sugar production parallels that of a number of other things we consume, including coffee, tea, cocoa, and tobacco, that collectively have significant environmental effects.

2. Neither is terribly good for us, at least not in the quantities and form in which we consume them.

3. Both have histories that closely tie them to the growth and emergence of the capitalist world economy. They are powerful symbols of the rise and economic expansion of capitalism; indeed, they are a result and a reason for it.

4. With the rise of the fast-food industry, beef and sugar, fat and sucrose have become the foundations of the American diet, accounting for more than one-half of the caloric intake of North Americans and Europeans (Gardner and Halweil 2000:15). Indeed, they are foundation foods of the culture of capitalism symbolized in the hamburger and Coke, a hot dog and soda, and the fat and sucrose dessert, ice cream.

The Case of Sugar

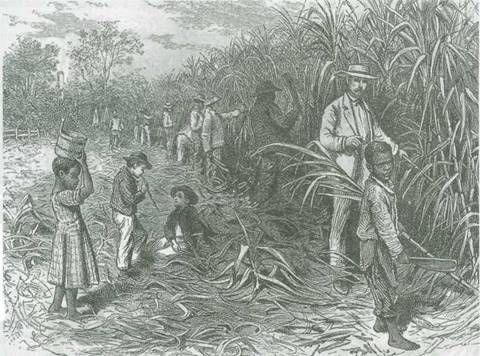

The history of sugar reveals how private economic interests, along with economic policies of the nation-state and changes in the structure of society, combined to convert a commodity from a luxury good believed to have health benefits into a necessity with overall harmful health consequences. In the process, it vastly increased the exploitation of labor (first in the form of slavery, then in migrant labor), converted millions of acres of forest into sugar production (in the process expelling millions from their land), and changed the dietary habits of most of the world. It illustrates how our consumption patterns are determined in capitalism and why we engage in behavior that may be environmentally unsound and personally harmful. The story of sugar is an excellent case study of how the nation-state-mediated interaction of the capitalist, the laborer, and the consumer produces some of our global problems.

Sugar Origins and Production

Sugarcane, until recently the major source of sugar, was first domesticated in

Sugar production alters the environment in a number of ways. Forests must be cleared to plant sugar; wood or fossil fuel must be burned in the evaporation process; waste water is produced in extracting sucrose from the sugarcane; and more fuel is burned in the refining process. When

sugar house. Within a few decades wood was so scarce that the government tried, in vain, to protect the forests from the lumberjacks (Crosby 1986:96). The Guanche were also gone within a century. When sugar production expanded in the seventeenth century, the sugar refineries of

Sugar, therefore, like virtually all commodities, comes to us at a heavy environmental cost. Yet people did not always crave sugar. For that to happen, a luxury had to be converted into a necessity, a taste had to be created.

Uses of Sugar

By A.D. 1000, when sugar was grown in Europe and the

Sugar was also used for decoration, mixed with almonds (marzipan) and molded into all kinds of shapes, the decorations becoming central to celebrations and feasts. And it was used as a spice in cooking and, of course, as a sweetener. It was also used as a preservative. We still use sugar to preserve ham, and it is often added to bread to increase its shelf life. But through the seventeenth century, even with its diverse uses, it was an expensive luxury item reserved for the upper classes.

The Development of the Sugar Complex

As a luxury item, sugar brought considerable profits for those who traded in it. In fact, it was the value of sugar as a trade item in the fifteenth and sixteenth centuries that led Spain and Portugal to extend sugarcane production, first to the Atlantic Islands, then to the Caribbean islands, and finally to Brazil, from which, beginning in 1526, raw sugar was shipped to Lisbon for refining.

Modern economists like to talk about the spin-off effects of certain commodities, that is the extent to which their production results in the development of subsidiary industries. For example, production of automobiles requires road construction, oil and petroleum production, service stations, auto parts stores, and the like. Sugar production also produced subsidiary economic activities; these included slavery, the provisioning of the sugar producers, shipping, refining, storage, and wholesale and retail trade.

The increased demand for sugar in the eighteenth and nineteenth centuries represented a boon for

The slave trade was a major factor in the expansion of the sugar industries. Slaves from Europe and the Middle East were first used on the Spanish and Portuguese plantations of the Canary Islands and Madeira, but by the end of the fifteenth century slaves from

Money was to be made also from the shipment of raw sugar to European refineries, more yet from the wholesale and retail sale of sugar, and probably more yet from the sale by European merchants of necessary provisions to the plantation owners. Investors in Europe, especially

sugar plantations, the slave trade, long-distance shipping, wholesale and retail trade, and investment finance.

The Expansion of Sugar Consumption

It was not until the late seventeenth century that sugar production and sales really began to influence sugar consumption in

Why did people in

Second, the benefits of sugar were widely touted by various authorities, notably popular physicians. Dr. Frederick Slare found sugar a venerable cure-all. He recommended that women include at their breakfast bread, butter, milk, and sugar. Coffee, tea, and chocolate were similarly 'endowed with uncommon virtues,' he said, adding that his message would please the West Indian merchant and the grocer who became wealthy on the production of sugar. Slare also prescribed sugar as a dentifrice, a lotion, and a substitute for tobacco in the form of snuff and for babies. Sidney Mintz (1985:107-108) said of Slare that while his enthusiasm for sugar is suspect, it is more than a curiosity because it relates to so many aspects of what was then still a relatively new commodity; furthermore, by stressing sugar's value as a medicine, food, and preservative, he was drawing additional attention to it.

Slare's enthusiasm for sugar was not an oddity; others shared it. No contemporary advertising executive could improve on the description of sugar by John Oldmixon, a contemporary of Slare:

One of the most pleasant and useful Things in the World, for besides the advantage of it in Trade, Physicians, and Apothecaries cannot be without it, there being nearly three Hundred Medicines made up with sugar; almost all Confectionery Wares receive their Sweetness and Preservation from it, most Fruits would be pernicious without it; the finest pastries could not be made nor the rich Cordials that are in the Ladies' Closets, nor their Conserves; neither could the Dairy furnish us with such a variety of Dishes, as it does, but by their Assistance of this noble Juice. (cited Mintz 1985:108)

A third reason that sugar consumption increased in the eighteenth century was its use as a sweetener for three other substances, all bitter and all technically drugs (stimulants): tea, coffee, and cocoa. All of these were used in their places of origin without sugar, in spite of their bitterness. All three initially were drinks for the wealthy; by the time they were used by others they were generally served hot and sweetened.

Fourth, sugar's reputation as a luxury good inspired the middle classes to use it to emulate the wealthy; sugar was a sign of status. The powerful used sugar for conspicuous consumption, as a symbol of hospitality and the like. When sugar was a luxury the poor could hardly emulate these uses, but as the price declined and as its use expanded, sugar became available to the poor to use in much the same way as their social betters.

Finally, sugar consumption increased because the government increased its purchase of sugar and sugar products. After the capture of

Thus sugar production and consumption increased, as did the amount of land devoted to its production and the number of sugar mills and refineries, distilleries producing rum, and slaves employed in the whole process. Most important, the profits generated by the sugar trade increased dramatically.

The Mass Consumption of Sugar

By 1800, British sugar consumption had increased 2,500 percent since 1650 and 245,000 tons of sugar reached European consumers annually from the world market. By 1830 production had risen to 572,000 tons per year, an increase of more than 233 percent. By 1860, when beet sugar production was also rising, world production of sucrose increased another 233 percent to 1.373 million tons. Six million tons were produced by 1890, another 500 percent increase (Mintz 1985:73).
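As a check on these figures, the sketch below recomputes the growth between the quoted tonnages. It uses only the numbers in the paragraph above and assumes, for the sake of the comparison, that the 1800 figure (sugar reaching European consumers) can be set beside the later production figures. The helper name `growth` is hypothetical.

```python
# Tonnages quoted above (Mintz 1985:73), in tons per year.
production = {1800: 245_000, 1830: 572_000, 1860: 1_373_000, 1890: 6_000_000}


def growth(old, new):
    """Return (new level as a percentage of old, percentage increase over old)."""
    percent_of_old = new / old * 100
    return percent_of_old, percent_of_old - 100


years = sorted(production)
for start, end in zip(years, years[1:]):
    of_old, increase = growth(production[start], production[end])
    print(f"{start}-{end}: {of_old:.0f}% of the earlier level, "
          f"a {increase:.0f}% increase")
```

On this arithmetic, the quoted 233 percent matches the 1830 level expressed as a percentage of the 1800 level rather than a percentage increase, so the percentages in the paragraph are perhaps best read as approximate ratios between successive levels.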

Two acts by the British government helped spur this massive increase in sugar production and consumption. First, the government removed tariffs on the imports of foreign sugar. This made foreign sugar more accessible to British consumers and forced domestic producers to lower their prices, making it affordable to virtually all levels of British society. Second,

The lower price of sugar increased its use in tea and stimulated a dramatic rise in the production of preserves and chocolate. More important, it must have been apparent to sugar producers and sellers that there was a fortune to be made by increasing the availability of sugar to the working mass of

Sidney Mintz's history of sugar reveals how much social, political, and economic power had to do with increased sugar consumption. Planters, slavers, shippers, bankers, refiners, grocers, and government officials, all profiting in one way or another from increased sugar consumption, exercised power to support the rights and prerogatives of planters, the maintenance of slavery, the availability of sugar and its products (molasses, rum, preserves) and products associated with it (tea, coffee, cocoa), and to supply it to the people at large at prices they could afford. Thus the consumption of sugar was hardly just a matter of taste; it had to do with investments, taxes, the dispensation of sugar through government agencies, and a desire to emulate the rich, among other things. It also had to do with convenience and the changes in household structure, labor, and diet that accompanied the Industrial Revolution.

Rural workers in

As a result, the diet of the urban working class and poor was transformed to one dominated by tea, sugar, store-bought white bread, and jam. Hot tea replaced vegetable broth. Jams (50-65 percent sugar) were cheaper than butter to put on bread, were easily stored, and could be left open on shelves for children to spread on bread in the absence of adults. In other words, the cultural and social constraints of time and cost created in the urban, industrial setting combined with the convenience of sugar and the prodding of those who profited from its sale to shape the diet of the British working class. It was an ideal arrangement, for, as E. P. Thompson (1967) noted, after providing profits to plantation and refinery investors, sugar provided the bodily fuel for the working people of

Modern Sugar

Sidney Mintz (1985:180-181) suggested that the consumption of goods such as sugar is the result of profound changes in the lives of working people, changes that made new forms of foods and of eating seem 'natural,' as new work schedules, new sorts of labor, and new conditions of life became 'natural.' This does not mean that we lack a choice in what we consume, but that our choice is made within various constraints. We may have a choice between a McDonald's hamburger and a Colonel Sanders chicken leg during a half-hour lunch break. The time available acts to limit our choice, removing, for example, the option of a home-cooked vegetarian lunch.

Sugar has become, as it did for the nineteenth-century British laborer, a mainstay of the fast-food diet in the

Thus, sugar fits our budgets, our work schedules, and our psychological needs while at the same time generating monetary profits and growth. As Mintz (1985:186) put it, sugar

[s]erved to make a busy life seem less so; in the pause that refreshes, it eased, or seemed to ease, the changes back and forth from work to rest; it provided swifter sensations of fullness or satisfaction than complex carbohydrates did; it combines easily with many other foods, in some of which it was also used (tea and biscuit, coffee and bun, chocolate and jam-smeared bread) ... No wonder the rich and powerful liked it so much, and no wonder the poor learned to love it.

The Story of Beef

The story of beef is very much like that of sugar, except that livestock breeding has been indicted for even greater environmental damage than sugar production, largely because of the vast amount of land needed to raise cattle. As an agricultural crop, sugar is quite efficient; while it has little nutritional value, it is possible to get about eight million calories from one acre of sugarcane; to get eight million calories of beef requires 135 acres. In addition, much of the beef we eat is grain-fed to produce the marbling of fat that makes it choice grade and brings the highest prices; as mentioned earlier, 80 percent of the grain produced in the

Cattle raising consumes a lot of water. Half the water consumed in the

Cattle raising has also been criticized for its role in the destruction of tropical forests. Hundreds of thousands of acres of tropical forests in

carbon dioxide and contributes significantly to global warming. In addition, with increasing amounts of fossil fuel needed to produce grain, it now takes a gallon of gasoline to produce a pound of grain-fed beef.

Much of the rangeland of the

The same problems are occurring in areas of

In addition, beef is terribly inefficient as a source of food. By the time a feedlot steer in the

Americans are among the highest meat consumers in the world and the highest consumers of beef. Over 6.7 billion hamburgers are sold each year at fast-food restaurants alone. Furthermore, we are exporting our taste for beef to other parts of the world. The Japanese, who in the past consumed only one-tenth the amount of meat consumed by Americans, are increasing their consumption of beef. McDonald's sells more hamburgers in

Marvin Harris (1986) suggested that 'animal foods play a special role in the nutritional physiology of our species.' He pointed out that studies of gathering and hunting societies reveal that 35 percent of the diet comes from meat, more than even Americans eat, and that this dietary pattern has existed for hundreds of thousands of years. Many cultures have a special term for 'meat hunger.' The Ju/wasi of

Historically few societies, however, have made meat the center of their diet. If we look around the world, we find that most diets center on some complex carbohydrate (rice, wheat, manioc, yams, taro) or something made from these (bread, pasta, tortillas, and so on). To these are added some spice, vegetables, meat, or fish, the combination giving each culture's food its distinctive flavor. But meat and fish are generally at the edge, not the center, of the meal (Mintz 1985). Moreover, whether or not we just like meat, why do American preferences run to beef? Anthropologists Marvin Harris and Eric Ross (1987b) have some interesting answers that may help us understand why, in spite of the environmental damage our beef consumption causes, we continue to eat it in such quantities. The answers involve understanding the relationships among Spanish cattle, British colonialism, the American government, the American bison, indigenous peoples, the automobile, the hamburger, and the fast-food restaurant.

The Ascendancy of Beef

The story of the American preference for beef begins with the Spanish colonization of the

In

All the wealth of these inhabitants consists in their animals, which multiply so prodigiously that the plains are covered with them in such numbers that were it not for the dogs that devoured the calves ... they would devastate the country. (cited Rifkin 1992:49)

In colonial

The Emergence of the American Beef Industry

On the eve of the Industrial Revolution,

TABLE 7.2

| Year | Beef | Pork | Year | Beef | Pork | Year | Beef | Pork |
|---|---|---|---|---|---|---|---|---|
| 1998 | 64.9 | 49.1 | 1977 | 125.9 | 61.6 | 1950 | 63.4 | 69.2 |
| 1997 | 63.8 | 45.6 | 1975 | 120.1 | 54.8 | 1940 | 54.9 | 73.5 |
| 1996 | 65.0 | 49.9 | 1970 | 113.7 | 66.4 | 1920 | 59.1 | 63.5 |
| 1993 | 61.5 | 48.9 | 1960 | 85.1 | 64.9 | 1900 | 67.1 | 71.9 |
| 1990 | 64.0 | 46.4 | | | | | | |

See also Ross 1980:191; Bureau of Census, 1990, 1993, 1994; USDA/NASS Agricultural Statistics, 2000, https://www.usda.gov/nass/pubs/agr00/acro00.htm

meat. Eric Ross (1980) suggested that the motivation was not simply to get food but to keep meat prices low in order to keep wages low and allow industry in

Who was eating all this meat? It was not the working class, whose breakfast consisted of little more than bread, butter or jam, and tea with sugar and whose dinner might include a meat byproduct, such as Liebig's extract (made from hides and other residue), or inferior cuts. The gentry, however, apparently consumed vast quantities. Beef, in fact, had been for some time the choice of the British well-to-do. For example, in 1735 a group of men formed the Sublime Society of Beef Steaks, most renowned for the invention of the sandwich by one of its members. The society consisted largely of members of the British elite but also included painters, merchants, and theatrical managers. It existed until 1866. Twice a year the group would meet for dinner, at which, according to the society's charter, 'beef steaks shall be the only meat for dinner' (Lincoln 1989:85).

Here is one description, dating from 1887, of a typical breakfast table of the British nobility (Harris and Ross 1987b:35-36):

In a country house, which contains, probably, a sprinkling of good and bad appetites and digestions, breakfasts should consist of a variety to suit all tastes, viz.: fish, poultry, or game, if in season; sausages, and one meat of some sort, such as mutton cutlets, or fillets of beef; omelets, and eggs served in a variety of ways; bread of both kinds, white and brown, and fancy bread of as many kinds as can conveniently be served; two or three kinds of jam, orange marmalade, and fruits when in season; and on the side table, cold meats such as ham, tongue, cold game, or game pie, galantines, and in winter a round of spiced beef.

The army and navy consumed enormous amounts of meat, each sailor and soldier getting by regulation three-fourths of a pound of meat daily; in fact, the diet of the military was vastly superior to that of the bulk of the population. Between 1813 and 1835 the British War Office contracted for 69.6 million pounds of Irish salted beef and 77.9 million pounds of Irish salt pork; as Harris and Ross (1987:37-38) noted, Irishmen were able to eat the meat of their own country only by joining the army of the country that had colonized theirs. Furthermore, the British military, by distributing rum and meat to their men, helped to subsidize both the sugar and meat industries.

Lying largely untapped in the latter half of the nineteenth century, by either the British or the Americans, were the American Great Plains and the vast herds of

But cattle traders faced three problems in trying to make a profit from longhorn cattle. The first problem was shipping cattle to areas in the

The problem of transporting cattle to the Midwest and East was solved by a young entrepreneur, Joseph McCoy, who convinced the Union Pacific Railway to construct a siding and cattle pen at its remote depot in

But as the demand for meat, leather, and tallow grew, more land was needed for cattle; the plains, once considered the

Cattlemen, Eastern bankers, the railroads, and the U.S. Army believed the solution to both problems could be effected by the extermination of the buffalo, and they joined in a systematic campaign to that end. In a period of about a decade, from 1870 to 1880, in one of the world's greatest ecological disasters, buffalo hunters ended 15,000 years of continuous existence on the plains of the American bison, reducing herds of millions to virtual extinction. Stationed in

These men have done more to settle the vexed Indian question than the entire regular army has done in the last thirty years. They are destroying the Indians' commissary; and it is a well-known fact that an army losing its base of supplies is placed at a great disadvantage. Send them powder and lead if you will; but for the sake of lasting peace let them kill, skin, and sell until the buffalo is exterminated. Then your prairies can be covered with speckled cattle and the festive cowboy who follows the hunter as a second forerunner of an advanced civilization. (cited in Wallace and Hoebel 1952:66)

With the buffalo went the Indians of the Plains. Their major food and source of ritual and spiritual power removed, they were soon vanquished and confined to reservations, with land granted to them in earlier treaties with the

In one of the great ironies of history, cattlemen made fortunes selling beef to the

The final problem in the story of

As a consequence of the merger of cattle and corn in the 1870s, British banks were pouring millions into the American West. They formed the Anglo-American Cattle Company Ltd. with 70,000 of capital; then the Colorado Mortgage and Investment Company of

The takeover of the West by the British so alarmed some Americans that in the 1884 presidential election, both parties included planks that would limit 'alien holdings' in the

In response to both British tastes and midwestern farm interests, the U.S. Department of Agriculture (USDA) developed a system of grading beef that awarded the highest grade, prime, to the beef with the most fat content, choice grade to the next fattiest, select grade to the next, and so on. Thus the state participated in creating a system that inspired cattle raisers to feed cattle grain and add fat because it brought the best price, while at the same time communicating to the consumer that, since they were the most expensive, the most marbled cuts of beef must be the best.

The federal inspection and grading standards also aided another important sector of the beef industry, the meat packers. Beef packers wanted to centralize their operations, to bring live animals to one area to be butchered, but most states had laws that required inspection of live animals twenty-four hours before slaughter. Butchers were opposed to the centralized slaughter of beef because, since most animals were slaughtered and butchered locally, centralizing the operation would put many of them out of business. The beef packing industry lobbied successfully to convince Congress to pass a federal meat inspection system that, unlike state inspection systems, would not affect out-of-state centralized operations (Harris and Ross 1987b:202).

Aided by the government, the meat packing business proceeded to dominate the production and distribution of beef. Refrigeration technology and the new federal inspection standards allowed individuals such as George H. Hammond in 1871, Gustavus Swift in 1877, and Philip and Simeon Armour in 1882 to slaughter beef in one area of the country, Chicago, and ship it fresh to any other area of the country. Their growth and domination of the meat packing industry resulted in the concentration of production in five companies that by World War I handled two-thirds of all meat packing in the

One of the great technological innovations in meat packing was the assembly (or, as Jeremy Rifkin [1992] called it, 'disassembly') line. Henry Ford is generally credited with developing assembly line technology in the construction of his Model T Ford in 1913. However, even Ford said that he got his idea from watching cattle hung on conveyor belts passing from worker to worker, each assigned a specific series of cuts until the entire animal was dismembered. The working conditions in the meat packing industry were then and remain among the worst of any industry in the country. At the turn of the century they prompted Upton Sinclair to produce his quasi-fictional account, The Jungle (1906), whose descriptions of the slaughterhouses provoked such public outrage that the government acted to regulate the meat packing industry.

The state has also heavily subsidized our taste in beef by allowing cattlemen to graze cattle on public lands at a fraction of the market cost of grazing on private land, thus making beef more affordable and encouraging its consumption. As early as the 1880s, cattlemen were fencing millions of acres of public land, to which they had no title, with the newly invented barbed wire. In fact, at that time most of the cattle companies grazing their cattle on public land were British. After objections to the practice were raised, the government passed the Desert Land Act of 1887, which awarded land to anyone who improved it. The Union Cattle Company of

In 1934 Congress passed the Taylor Grazing Act, which transferred millions of acres of public land to ranchers if they took responsibility for improving it. In 1990, some 30,000 cattle ranchers in eleven western states grazed their cattle on 300 million acres of public land, an area equal to the fourteen East Coast states stretching from

The victory of beef, however, was not yet complete. To appreciate the story of American beef consumption, we need to understand the role of the American government in creating the legal definition of a hamburger and the infrastructure that encouraged the spread of the automobile.

Modern Beef

Legend has it that the hamburger was invented by accident when an

Henry Ford's Model T began the American romance with the automobile, and the number of Americans with cars grew enormously in the twentieth century. There are now as many automobiles as there are licensed drivers in the

Pork, as mentioned earlier, had always competed with beef for priority in American meat tastes. While beef was more popular in the Northeast and the West, pork was the meat of choice in the South; however, in the Mid-Atlantic states and the

One advantage of beef was its suitability for the outdoor grill, which became more popular as people moved into the suburbs. Suburban cooks soon discovered that pork patties crumbled and fell through the grill, whereas beef patties held together better. In addition, since the USDA does not inspect pork for trichinosis because the procedure would be too expensive, it recommended cooking pork until it was gray; but that makes pork very tough. Barbecued spare ribs are one pork alternative, but they are messier, have less meat, and can't be put on a bun.

In 1946 the USDA issued a statute that defined the hamburger:

Hamburger. 'Hamburger' shall consist of chopped fresh and/or frozen beef with or without the addition of beef fat as such and/or seasonings, shall not contain more than 30 percent fat, and shall not contain added water, phosphates, binders, or extenders. Beef cheek (trimmed Beef cheeks) may be used in the preparation of hamburgers only in accordance with the conditions prescribed in paragraph (a) of this section. (Harris 1987:125)

Marvin Harris (1987:125-126) noted that we can eat ground pork and ground beef, but we can't combine them, at least if we are to call it a hamburger. Even when lean, grass-fed beef is used for hamburger and fat must be added to bind it. The fat must come

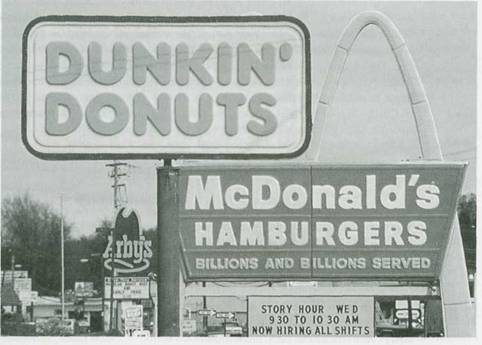

The row of fast-food restaurants that line streets in virtually every American town and city represents not only the union of sugar and fat but also the fast-paced lives required in a consumer-oriented society.

from beef scraps, not from vegetables or a different animal. This definition of the hamburger protects not only the beef industry but also the corn farmer, whose income is linked to cattle production. Moreover, it helps the fast-food industry, because the definition of hamburger allows it to use inexpensive scraps of fat from slaughtered beef to make, in fact, 30 percent of its hamburger. Thus an international beef patty was created that overcame what Harris called the 'pig's natural superiority as a converter of grain to flesh.'

The fast-food restaurant, made possible by the popularity of the automobile, put the final touch on the ascendancy of beef. Ray Kroc, the founder of McDonald's, tapped into the new temporal and work routines of American labor. With more women working outside the home, time and efficiency were wed to each other as prepared foods, snacks, and the frozen hamburger patty became more popular. In many ways, the fast-food restaurant and the beef patty on a bun were to the American working woman of the 1970s and 1980s what sugar, hot tea, and preserves were to the English female factory worker of the latter half of the nineteenth century. They both offered ease of preparation and convenience at a time when increasing numbers of women worked outside the home.

As with sugar, therefore, our 'taste' for beef goes well beyond our supposed individual food preferences. It is a consequence of a culture in which food as a commodity takes a form defined by economic, political, and social relationships. We can, as many have done, refuse beef. But to do so requires a real effort, as those who try to follow a strictly vegetarian diet can attest.

In addition, as with much of what Americans do, the matter of beef does not stop in the

The Internationalization of the Hamburger

In the 1960s, with the help of the World Bank, governments in South and

The state plays a role in the production and importation of foreign beef. Foreign beef suppliers must meet USDA certification of their herds and packing facilities and are subject to import quotas that are more informal than formal, since the quotas themselves are in violation of GATT. Therefore, countries from which we import beef must 'voluntarily' restrict sales to the

International financial agencies, such as the World Bank, also play a role in promoting cattle production by financing and requiring the establishment of a cattle infrastructure. For example, international lending institutions required

to add a cattle technical extension division and the Banco Nacional de Costa Rica to add animal husbandry and veterinary sections to the branches of the bank located in cattle ranching areas. The loans themselves were used for such things as road building in cattle-raising regions and livestock improvement. In fact, the International Development Bank devoted 21 percent of its loans in the 1960s to the cattle sector of the economy. Furthermore, the U.S. Agency for International Development (USAID) helped develop roads and livestock-related extension and research agencies for Costa Rican cattle farmers.

A powerful livestock lobby developed in

The increase in cattle production also had environmental consequences for

The expansion of cattle raising often occurred at the expense of peasant subsistence agriculture, as cattlemen evicted or forced peasants off their land or bought the land. Since cattle raising uses far less labor than agriculture, the peasants were forced into the cities, where unemployment was already high. And since cattle raising is profitable only on a large scale, the expansion led to further concentrations of wealth.

Yet if countries such as

Environmentally Sustainable Cattle Raising

Is it possible to develop ways of producing cattle that are not environmentally destructive? This is a question that some anthropologists are examining. Ronald Nigh, for example, has developed a project in

Nigh (1995) maintains that the destruction of the rainforest by cattle grazing is largely the result of the importation of what he called the factory model of agricultural production. The factory model is designed to produce a single product (corn, soy, beef, pork, etc.) in as short a time as possible. It tends to be technology-intensive and environmentally damaging. Furthermore, it tends to convert whole regions to a single type of agricultural production: cattle in one area, corn in another, wheat in another, and so on. In

Nigh suggested that it is far more productive and far less damaging to the environment to look at agriculture as an ecological rather than a manufacturing process and to adopt what S. R. Gleissman (1988; see also Posey et al. 1984) referred to as an agroecological approach. One foundation of this approach is to combine indigenous practices that have produced food yet preserved the environment with contemporary agricultural research. The major difference between a factory approach and an agroecological approach is that the latter creates a polyculture (the production of multiple crops and animals) rather than a monoculture (the growth or production of a single crop or animal). Indigenous methods of production in the rainforest create a system that enhances regeneration of land, flora, and fauna.

For example, there are sites of secondary vegetation in the Mexican rainforest left by Mayan farmers who practice swidden agriculture. The farmers clear a site, use it to grow corn for five to eight years, and then move on. These sites may soon look like the land abandoned by cattle ranchers, but Mayan farmers do not abandon the sites. They continue to work the garden, perhaps planting fruit trees, and use the site to attract mammals and birds to hunt. In fact, the area is designed to attract an animal crop and the Maya refer to it as 'garden hunting' (Nigh 1995). Since, unlike the factory model, no herbicides are used to clear the land, plant and animal life can regenerate. Thus traditional agriculture creates an environment that mixes fields, forests, and brushlands.

The idea is to create productive modules, each a mosaic of productive spaces. The agroecological model, drawing as it does on indigenous systems developed over centuries, creates an ecologically sustainable system of production modeled after natural systems, rather than a system that displaces natural ones.

Rather than demonizing cattle, Nigh said, it is possible to design an agricultural system modeled after indigenous systems in which cattle are integrated into an agroecological model, one in which diversity rather than uniformity is emphasized. For example, one area would be used for annual crops such as corn, squash, root crops, spices, and legumes. Secondary areas, including those previously degraded by overgrazing, can be used for fruit trees, forage, and so on. Other secondary areas, using specially selected animal breeds and grasses, can be devoted to intensive grazing. In his project, for example, they selected a breed developed in

Exporting Pollution

We can now see how economic, political, and social factors contribute to our patterns of consumption. The same analysis can be applied to many other commodities that we consume in great numbers and that have a detrimental effect on the environment. Some examples are large houses, electronic devices, and appliances. Furthermore, there is a host of environmental problems caused by industrial pollution; by the use, storage, and disposal of nuclear energy; and by the mountains of garbage that are accumulating as packaging becomes as much of a problem as the commodities it encloses. But there is a paradox here: While the consumption patterns of core countries are the primary cause of environmental pollution, resource depletion and destruction, and the production of toxic substances, the core countries suffer far less from environmental problems than peripheral countries. The

On December 12, 1991, Lawrence Summers, then chief economist of the World Bank, later Undersecretary of Treasury in the Clinton administration, and now President of Harvard University, sent a memorandum to some of his colleagues, intending only, the World Bank later said, to provoke discussion. The memo, in brief, argued that it made perfectly good economic sense for the

Summers argued that the World Bank should encourage the movement of 'dirty industries' from core countries to less developed countries. He based his argument on three things: first, from an economic point of view, the cost of illness associated with pollution measured in working days lost is cheapest in the country with the lowest wages; second, underdeveloped countries are 'underpolluted,' and, consequently, the initial increases in pollution will have a relatively low cost; finally, since people in less developed countries have a lower life expectancy, pollutants that cause diseases of the more elderly, such as prostate cancer, are less of a concern. In sum, Summers argued that a clean environment is worth more to the inhabitants of rich countries than to those of poor countries; therefore, the cost of pollution is less in poor countries than in rich countries; consequently, it makes perfect economic sense to export 'dirty' industries to the less developed countries (Foster 1993).

The reaction to the memo from most environmentalists was scathing. Jose Lutzenberger,

[i]t was almost a pleasant surprise to me to read reports in our papers and then receive [a] copy of your memorandum supporting the export of pollution to

Yet, John Bellamy Foster (1993:12) said, there was little in the memo that has not been stated in other terms many times, largely by economists and public policy analysts.

The memo was a perfect expression of the view of the environment and of people that emerges logically from the culture of capitalism. From an anthropological perspective, the memo is illustrative of our culture's cosmology, its view of the person and the environment. The premises of Summers's argument include:

1. The lives of people in the Third World, judged by 'foregone earnings' from illness and death, are worth less (hundreds of times less) than those of individuals in advanced capitalist countries where wages are hundreds of times higher. Therefore it makes sense to deposit toxic wastes in less developed countries.

2. Third World environments are underpolluted compared to places such as Los Angeles and

in 1989 because of air pollution).

3. A clean environment, in effect, is a luxury good pursued by rich countries because of the aesthetic and health standards in those countries. Thus the worldwide costs of pollution could decrease if waste was transferred to poor countries where a clean environment is 'worth' less, rather than polluting environments of the rich where a clean environment is 'worth' more.

Essentially, the memo expresses a perspective in which a monetary value can be put on both human life, based on wage prospects, and the environment, based on the value people place on a clean environment. It reveals the tendency of the culture of capitalism to commodify virtually everything, including human life and the environment. As Foster (1993:12) said, Summers's memo is not an aberration; in his role as chief economist for the World Bank, Summers's job was to help create conditions for the accumulation of profit and to ensure economic growth. Neither the welfare of the world's population, nor the health of the environment, 'nor even the fate of individual capitalists themselves, can be allowed to stand in the way of this single-minded goal.'

The Economist, in fact, went on to defend Summers, saying that governments constantly make decisions in regard to health, education, working conditions, housing, and the environment that are based on differential valuations of certain people over others. For example, in the 1980s the U.S. Office of Management and Budget (OMB) sponsored a number of studies that concluded that the value of a human life was between $500,000 and $2 million, then used those figures to argue that some forms of pollution control were cost-effective and others were not. Other economists have argued that the value of a human life should be based on earning power, thus a woman is worth less than a man, a Black's life worth less than a White's.

As shocking as that may sound, that is exactly how we, for the most part, operate. For example, three out of four off-site commercial hazardous landfills in southern states were located primarily in African American communities, although African Americans represent only 20 percent of the population. The core countries already ship 20 million tons of waste annually to the periphery. In 1987 dioxin-laden industrial waste was shipped from

Economists like Summers argue that it is more important to build an economic infrastructure for future generations than to protect against global warming. They compare the cost of rainforest destruction with the economic cost of conserving it, without recognizing that rainforest destruction is irrevocable. They argue that rather than halting economic development because of global warming, countries will be able to use their newly developed riches to build retaining walls to hold back the rising sea; furthermore, they argue that money spent to halt carbon dioxide output could be better spent dealing with population growth (Foster 1993:16).

Foster concluded that capitalism will never sacrifice economic growth and capital accumulation for environmental reform. Its internal logic will always be 'let them eat pollution.' Opposition will develop, as we shall see in later chapters, and some changes made, as have been made in the

The latest development in American tastes for automobiles is the preference for so-called sport utility vehicles (SUVs). In 2000 they constituted one of every two family vehicles sold (65 million in all). These vehicles emit 57 percent more carbon dioxide than standard automobiles, are the fastest-growing source of global warming gases in the

Where radical change is called for little is accomplished within the system and the underlying crisis intensifies over time. Today this is particularly evident in the ecological realm. For the nature of the global environmental crisis is such that the fate of the entire planet and social and ecological issues of enormous complexity are involved, all traceable to the forms of production now prevalent. It is impossible to prevent the world's environmental crisis from getting progressively worse unless root problems of production, distribution, technology, and growth are dealt with on a global scale. And the more that such questions are raised, the more it becomes evident that capitalism is unsustainable (ecologically, economically, politically, and morally) and must be superseded.

Conclusion

We began this chapter by asking why people choose to consume what they do, how they do, and when they do. We concluded that our tastes are largely culturally constructed and that they tend to serve the process of capital accumulation. There is no 'natural' reason why we engage in consumption patterns that do harm to the environment. Furthermore, we suggested that of all the contributing factors to environmental pollution, the most difficult to 'fix' is our consumption behavior, since it serves as the foundation of our culture.

To illustrate, we examined how the American taste for sugar and fat was historically constructed largely to serve the interests of sugar and beef producers. We examined how anthropological research is trying to help peripheral countries in their effort to meet western demands for products such as beef without destroying their environmental resources.

We also examined how core countries are attempting to maintain their culture by exporting the by-products (pollution and resource depletion) and how from some perspectives that seems to make perfect sense.

Most important, we examined the historical and cultural dynamic that drives the consumption of specific commodities and our attitudes about the environmental damage that results, and concluded that it is not an aberration but an intrinsic part of our way of life.
